ECE 434 Final Project: HLS Video Streaming

Karl Swanson

Professor Schonfeld

Streaming on the Web

For many years, streaming multimedia content on the web required special server software, protocols, players, and plugins. To support all users, content producers needed to supply video in QuickTime, RealPlayer, and Windows Media Player formats. Users were constantly updating plugins and players. Videos did not even play inside the web page: users had to download the entire video, or follow a link that opened it in an external application.

In 1996, the Netscape Plugin Application Programming Interface brought multimedia into the browser. Applications such as RealPlayer were rendered inline to display content. Plugins still relied on streaming servers and applications, but video was available in the browser.

As the web became more dynamic, Flash Player overtook Real and Microsoft to become the dominant web player. Flash offered content protection, ad insertion, and customizable players that ran on all major platforms. Today, Flash remains one of the most popular plugins.

In June of 2007, Apple released the iPhone and refused to support Adobe Flash. Apple considered Flash too proprietary, insecure, and power inefficient. Instead, Apple wanted developers to use HTML5 and HLS to deliver multimedia content. Developers followed through: by supporting the iPhone, they gained access to millions of additional customers. Apple's weight behind HTML5 and HLS increased development momentum and adoption.

Innovations in multimedia file formats and the HTTP protocol made pseudo-streaming over HTTP possible. HTTP/1.1 added byte-range support, allowing browsers to request specific parts of a file. Instead of putting metadata at the end of video files, developers put it right at the beginning, so a player can start decoding before the whole file has arrived. These two innovations allowed for progressive downloading and streaming of multimedia content over HTTP. Content Delivery Networks and browsers have added these capabilities, eliminating the need for proprietary multimedia servers and plugins.
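As a rough illustration of byte-range requests, the sketch below builds the `Range` header values a client might send to fetch a file in fixed-size pieces. The function name `byte_ranges` and the chunk size are assumptions made for this example; only the `bytes=start-end` header syntax comes from HTTP/1.1.

```python
def byte_ranges(total_size, chunk_size):
    """Return HTTP Range header values that together cover total_size bytes."""
    ranges = []
    start = 0
    while start < total_size:
        # Range end positions are inclusive, hence the "- 1".
        end = min(start + chunk_size, total_size) - 1
        ranges.append(f"bytes={start}-{end}")
        start = end + 1
    return ranges


# A 2500-byte file fetched in 1000-byte pieces.
print(byte_ranges(2500, 1000))
# ['bytes=0-999', 'bytes=1000-1999', 'bytes=2000-2499']
```

A player that wants to seek can use the same mechanism to request only the part of the file it needs.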

Today, browsers support native H264 video and ship with auto-updating versions of popular plugins. The videos just work. From a user's perspective, it is easier than ever to watch cat videos online.

Technical Overview

HTTP Live Streaming uses many industry standard tools to deliver live and previously recorded video content. The architecture has three main components: a media encoder, a stream segmenter, and a web server.

Media Encoder - The Media Encoder takes a video signal and transcodes it to an H.264 stream. Audio is encoded with the AAC codec. The audio and video streams are multiplexed into an MPEG Transport Stream. This container is very similar to the MPEG transport streams used for Blu-ray, ATSC, and digital cable distribution. The stream is then sent to a segmenter for distribution.

Stream Segmenter - The Stream Segmenter ingests the video stream. It slices the video into 1 to 10 second video files. Each file needs to have at least one keyframe placed at the beginning of the file for optimum playback.
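The keyframe requirement can be sketched as a small cut-point calculation. This is only an illustration, not how any particular segmenter works: the function name `segment_starts`, its parameters, and the greedy rule (cut at the last keyframe that keeps a segment within the target duration) are all assumptions.

```python
def segment_starts(keyframe_times, stream_duration, target_duration):
    """Choose segment start times (seconds) so every segment begins on a keyframe.

    keyframe_times must be sorted. A cut is made at the last keyframe that
    keeps the current segment no longer than target_duration. If two
    keyframes are further apart than target_duration, that segment is
    unavoidably longer.
    """
    if not keyframe_times:
        return []
    starts = [keyframe_times[0]]
    # Treat the end of the stream as a final boundary.
    boundaries = list(keyframe_times) + [stream_duration]
    for prev, cur in zip(boundaries, boundaries[1:]):
        # Extending the current segment to `cur` would exceed the target,
        # so start a new segment at the previous keyframe.
        if cur - starts[-1] > target_duration and prev != starts[-1]:
            starts.append(prev)
    return starts


# Keyframes every 2 seconds, 12-second stream, 4-second target.
print(segment_starts([0, 2, 4, 6, 8, 10], 12, 4))
# [0, 4, 8]
```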

The stream segmenter also creates and updates the m3u8 playlist files. These files point the remote media player to the different video chunks.

The main m3u8 playlist references playlists at other bitrates. The following is a playlist for the 8500 kbps stream.

    #EXTM3U
    #EXT-X-VERSION:3
    #EXT-X-TARGETDURATION:4
    #EXT-X-MEDIA-SEQUENCE:0
    #EXTINF:4,
    stream_85000.ts
    #EXTINF:4,
    stream_85001.ts
    #EXTINF:2,
    stream_85002.ts
    #EXTINF:2,
    stream_85003.ts
    #EXTINF:4,
    stream_85004.ts
    #EXTINF:4,
    stream_85005.ts
    #EXTINF:3,
    stream_85006.ts
    #EXTINF:2,
    stream_85007.ts
    #EXTINF:4,
    stream_85008.ts
    #EXTINF:0,
    stream_85009.ts
    #EXTINF:4,
    stream_850010.ts
    #EXTINF:2,
    stream_850011.ts
    #EXTINF:4,
    stream_850012.ts
    #EXTINF:4,
    stream_850013.ts
    #EXTINF:4,
    stream_850014.ts
    #EXTINF:0,
    stream_850015.ts
    #EXTINF:3,
    stream_850016.ts
    #EXTINF:4,
    stream_850017.ts
    #EXTINF:4,
    stream_850018.ts
    #EXTINF:1,
    stream_850019.ts
    #EXTINF:2,
    stream_850020.ts
    #EXTINF:4,
    stream_850021.ts
    #EXTINF:1,
    stream_850022.ts
    #EXTINF:0,
    stream_850023.ts
    #EXT-X-ENDLIST
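To show how a player might read such a playlist, here is a minimal parsing sketch. It assumes each `#EXTINF` tag sits on its own line directly before the segment URI, and it ignores every other tag. The function name `parse_media_playlist` is made up for this example.

```python
def parse_media_playlist(text):
    """Return a list of (uri, duration_seconds) pairs from a media playlist."""
    segments = []
    duration = None
    for line in text.splitlines():
        line = line.strip()
        if line.startswith("#EXTINF:"):
            # Format is "#EXTINF:<duration>,<optional title>".
            duration = float(line[len("#EXTINF:"):].split(",", 1)[0])
        elif line and not line.startswith("#"):
            # Any non-tag, non-blank line is a segment URI.
            segments.append((line, duration))
            duration = None
    return segments


sample = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:4
#EXT-X-MEDIA-SEQUENCE:0
#EXTINF:4,
stream_85000.ts
#EXTINF:2,
stream_85002.ts
#EXT-X-ENDLIST
"""

segments = parse_media_playlist(sample)
print(segments)                      # [('stream_85000.ts', 4.0), ('stream_85002.ts', 2.0)]
print(sum(d for _, d in segments))   # 6.0
```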

Web Server - The segmented video files and playlists are served over HTTP using a standard web server. This eliminates the need for custom streaming servers and hardware. M3U8 files are served with the application/x-mpegURL or application/vnd.apple.mpegurl MIME type, and the .ts files are served with the video/MP2T MIME type.
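The MIME type mapping can be expressed as a small lookup, sketched below. The helper name `content_type_for` and the `application/octet-stream` fallback are assumptions for illustration; in practice this mapping usually lives in the web server's configuration.

```python
import os

# File extension -> Content-Type for the two kinds of HLS files.
HLS_CONTENT_TYPES = {
    ".m3u8": "application/vnd.apple.mpegurl",
    ".ts": "video/MP2T",
}


def content_type_for(path):
    """Return the Content-Type header value to use for an HLS file path."""
    ext = os.path.splitext(path)[1].lower()
    # Fallback for unknown extensions is an assumption of this sketch.
    return HLS_CONTENT_TYPES.get(ext, "application/octet-stream")


print(content_type_for("stream_8500.m3u8"))  # application/vnd.apple.mpegurl
print(content_type_for("stream_85000.ts"))   # video/MP2T
```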

Features

Adaptive Streaming

An M3U8 playlist file can reference streams at many different bitrates. This allows devices to display the best available stream. Internet bandwidth is usually the limiting factor on video quality. If the player chooses a high bitrate stream, the picture will be great, but the video may occasionally stop to buffer. If the bitrate is too low, the video quality is low and the user experience suffers. The player needs to choose the right bitrate stream for the best user experience.

Adaptive bitrate streaming is also used to reduce buffering. Devices like the Roku will start playing videos at a lower bitrate, then scale up to match the connection speed. Video quality may suffer for the first few HLS chunks, but the user doesn't have to wait. The video starts almost instantly.
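One simple way a player could make this choice is sketched below. Real players use more elaborate heuristics; the bitrate ladder, the `safety` margin of 0.8, and the function name `choose_bitrate` are all assumptions for illustration.

```python
# Example bitrate ladder in kbps (illustrative values).
LADDER_KBPS = [200, 400, 600, 1200, 3500, 5000, 6500, 8500]


def choose_bitrate(measured_kbps, ladder=LADDER_KBPS, safety=0.8):
    """Pick the highest bitrate that fits within a fraction of measured throughput."""
    budget = measured_kbps * safety
    usable = [b for b in ladder if b <= budget]
    # If even the lowest rung does not fit, fall back to it anyway.
    return max(usable) if usable else min(ladder)


# Start on the lowest rung, then re-evaluate after each downloaded chunk.
current = min(LADDER_KBPS)
for throughput in [1000, 4800, 12000]:  # hypothetical throughput samples in kbps
    current = choose_bitrate(throughput)
    print(throughput, "->", current)
# 1000 -> 600
# 4800 -> 3500
# 12000 -> 8500
```

The safety margin leaves headroom so that a small dip in throughput does not immediately cause buffering.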

Streaming providers such as YouTube Live and Livestream transcode the video into many different formats, resolutions, and bitrates to meet the needs of different players and bandwidths. These different streams are referenced in the M3U8 playlist.

The following m3u8 playlist references videos at 200, 400, 600, 1200, 3500, 5000, 6500, and 8500 kbps. The video player will scale to play the appropriate file for the network connection. Many players will start at 200 kbps and scale up to use the available network bandwidth.

    #EXTM3U
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=264000,RESOLUTION=416x234
    stream_200.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=464000,RESOLUTION=480x270
    stream_400.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=664000,RESOLUTION=640x360
    stream_600.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=1296000,RESOLUTION=640x360
    stream_1200.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=3596000,RESOLUTION=960x540
    stream_3500.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=5128000,RESOLUTION=1280x720
    stream_5000.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=6628000,RESOLUTION=1280x720
    stream_6500.m3u8
    #EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=8628000,RESOLUTION=1920x1080
    stream_8500.m3u8
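A player has to read this playlist before it can pick a stream. The sketch below extracts the bandwidth, resolution, and URI of each variant. It is deliberately naive: it splits attributes on commas, which works for the playlist shown here but would break on quoted attribute values that contain commas. The function name `parse_master_playlist` is an assumption.

```python
def parse_master_playlist(text):
    """Return a list of variant dicts (uri, bandwidth in bits/s, resolution)."""
    variants = []
    attrs = None
    prefix = "#EXT-X-STREAM-INF:"
    for line in text.splitlines():
        line = line.strip()
        if line.startswith(prefix):
            attrs = {}
            # Naive split: assumes no quoted values containing commas.
            for pair in line[len(prefix):].split(","):
                key, _, value = pair.partition("=")
                attrs[key] = value
        elif line and not line.startswith("#") and attrs is not None:
            # The URI line follows its #EXT-X-STREAM-INF tag.
            variants.append({
                "uri": line,
                "bandwidth": int(attrs["BANDWIDTH"]),
                "resolution": attrs.get("RESOLUTION"),
            })
            attrs = None
    return variants


sample = """#EXTM3U
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=264000,RESOLUTION=416x234
stream_200.m3u8
#EXT-X-STREAM-INF:PROGRAM-ID=1,BANDWIDTH=8628000,RESOLUTION=1920x1080
stream_8500.m3u8
"""

variants = parse_master_playlist(sample)
best = max(variants, key=lambda v: v["bandwidth"])
print(best["uri"])  # stream_8500.m3u8
```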

Apple HLS Encoding Recommendations

Apple provides encoding recommendations for developers encoding video for iOS devices in Technical Note TN2224. Developers may provide additional or fewer encodings, depending on the devices and customers that they serve. Netflix serves video to over 900 different devices, some using HLS. To meet demands of different bitrates and devices, Netflix encodes each video into 120 different formats.

| Connection | Resolution | Frame Rate (fps) | Total Bit Rate (kbps) | Video Bit Rate (kbps) | Audio Bit Rate (kbps) | Audio Sample Rate (kHz) | Keyframe Interval (frames) | H264 Minimum Profile |
|---|---|---|---|---|---|---|---|---|
| Cell | 416x234 | 12 | 264 | 200 | 64 | 48 | 36 | 3.0 Baseline |
| Cell | 480x270 | 15 | 464 | 400 | 64 | 48 | 45 | 3.0 Baseline |
| WiFi/Cell | 640x360 | 29.97 | 664 | 600 | 64 | 48 | 90 | 3.0 Baseline |
| WiFi | 640x360 | 29.97 | 1296 | 1200 | 96 | 48 | 90 | 3.1 Baseline |
| WiFi | 960x540 | 29.97 | 3596 | 3500 | 96 | 48 | 90 | 3.1 Main |
| WiFi | 1280x720 | 29.97 | 5128 | 5000 | 128 | 48 | 90 | 3.1 Main |
| WiFi | 1280x720 | 29.97 | 6628 | 6500 | 128 | 48 | 90 | 3.1 Main |
| WiFi | 1920x1080 | 29.97 | 8628 | 8500 | 128 | 48 | 90 | 4.0 High |
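Two relationships in the table can be checked with simple arithmetic: the total bit rate is the sum of the video and audio bit rates, and the keyframe interval divided by the frame rate gives the time between keyframes. The sketch below assumes the keyframe interval is expressed in frames, and its variable names are made up for the example.

```python
# (frame rate, total kbps, video kbps, audio kbps, keyframe interval) per table row.
ROWS = [
    (12, 264, 200, 64, 36),
    (15, 464, 400, 64, 45),
    (29.97, 664, 600, 64, 90),
    (29.97, 1296, 1200, 96, 90),
    (29.97, 3596, 3500, 96, 90),
    (29.97, 5128, 5000, 128, 90),
    (29.97, 6628, 6500, 128, 90),
    (29.97, 8628, 8500, 128, 90),
]

# Total bit rate should equal video plus audio in every row.
totals_match = all(total == video + audio for _, total, video, audio, _ in ROWS)

# Seconds between keyframes, assuming the interval is counted in frames.
keyframe_seconds = [round(interval / fps, 2) for fps, _, _, _, interval in ROWS]

print(totals_match)       # True
print(keyframe_seconds)   # [3.0, 3.0, 3.0, 3.0, 3.0, 3.0, 3.0, 3.0]
```

Under that assumption, every encoding places a keyframe roughly every three seconds, regardless of frame rate.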

Adaptive Streaming Sample Player

Sample streaming video at different bitrates by selecting from the table above.


Note: This demo has been tested in the iOS and desktop versions of Chrome and Safari. Other browsers may not be supported.

Subtitles and Secondary Audio Tracks

Additional audio tracks and subtitles can be encoded within the HLS playlist file. This allows for localization and improves accessibility without requiring computationally intensive re-encoding for each additional language. Subtitles and audio tracks can be embedded within the MPEG-2 TS file format used within HLS, or be referenced within the playlist.
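In a playlist, alternate audio and subtitle tracks are declared with the `#EXT-X-MEDIA` tag from the HLS specification. The sketch below builds such a line. The helper name `media_tag`, the group ID, and the file names are hypothetical, and only a subset of the tag's attributes is shown.

```python
def media_tag(media_type, group_id, name, language, uri, default=False):
    """Build an #EXT-X-MEDIA line for an alternate audio or subtitle track."""
    attrs = [
        f"TYPE={media_type}",            # e.g. AUDIO or SUBTITLES
        f'GROUP-ID="{group_id}"',
        f'NAME="{name}"',
        f'LANGUAGE="{language}"',
        f"DEFAULT={'YES' if default else 'NO'}",
        f'URI="{uri}"',
    ]
    return "#EXT-X-MEDIA:" + ",".join(attrs)


# Hypothetical English and Spanish audio tracks in one group.
print(media_tag("AUDIO", "aud", "English", "en", "audio_en.m3u8", default=True))
print(media_tag("AUDIO", "aud", "Spanish", "es", "audio_es.m3u8"))
```

Because each language is its own small playlist, adding one does not require re-encoding the video streams.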

Scalability

Video files are accessed using industry standard HTTP. No additional ports need to be configured for client access. Video files can be served using globally distributed CDNs such as Amazon S3 and Akamai without using expensive streaming server software. These global CDNs can provide quick access to video content by hosting the video in multiple locations around the globe. Routers and smart DNS servers catch requests and route them to the nearest server.

There is much competition in the CDN market, driving down costs for customers. In the past, CDN use was limited to the large companies that could afford it. Today, anyone can afford to use CDNs to distribute content. Companies such as Amazon and Google even offer free CDN hosting to attract more customers. This project page, and the included sample videos, are hosted on Amazon's S3 CDN.

Security

Companies like Amazon and Netflix need to license content to remain in business. They need to be able to provide videos to customers without letting them save, copy, or redistribute licensed content. In addition to the SSL/TLS encryption used in HTTPS, HLS allows audio and video to be encrypted using AES. This allows encrypted video to be transferred over the same scalable HTTP infrastructure. Only a player with the right encryption keys can access the videos.
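In the playlist, encryption is announced with an `#EXT-X-KEY` tag that names the method and the location of the key. The HLS specification defines an AES-128 method and, when the tag carries no IV attribute, uses the segment's media sequence number as the initialization vector, written as a 16-byte big-endian value. The sketch below shows only the tag and that IV derivation, not the encryption itself. The helper names and the key URL are hypothetical.

```python
def key_tag(key_uri):
    """Build an #EXT-X-KEY line announcing AES-128 encrypted segments."""
    return f'#EXT-X-KEY:METHOD=AES-128,URI="{key_uri}"'


def default_iv(media_sequence_number):
    """IV used when the key tag has no IV attribute: the sequence number
    as a 16-byte big-endian integer, padded on the left with zeros."""
    return media_sequence_number.to_bytes(16, "big")


print(key_tag("https://example.com/keys/stream.key"))  # hypothetical key URL
print(default_iv(7).hex())
# 00000000000000000000000000000007
```

The key URL itself can be protected with HTTPS and access control, so only authorized players can fetch it.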

Additionally, HTTP can provide authentication and access control to the video files. Unauthorized users without the right credentials, cookie, token, or key can be refused access. This gives a multi-pronged approach to securing content from unauthorized access.
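One common form of token-based access control is a signed, expiring URL. The sketch below is a generic illustration using an HMAC, not the scheme of any particular CDN or of HLS itself. The function names, the `path:expires` message format, and the secret are all assumptions.

```python
import hashlib
import hmac


def sign(path, expires, secret):
    """Return a hex token binding a path to an expiry time (Unix seconds)."""
    message = f"{path}:{expires}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def is_authorized(path, expires, token, secret, now):
    """Accept the request only if the token is valid and not yet expired."""
    if now > expires:
        return False
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(sign(path, expires, secret), token)


secret = b"example-secret"  # hypothetical shared secret held by the server
token = sign("/video/stream_8500.m3u8", 1000, secret)

print(is_authorized("/video/stream_8500.m3u8", 1000, token, secret, now=900))   # True
print(is_authorized("/video/stream_8500.m3u8", 1000, token, secret, now=1001))  # False
print(is_authorized("/video/stream_200.m3u8", 1000, token, secret, now=900))    # False
```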

Examples

YouTube Live


In April of 2011, YouTube announced a live streaming service to compete with Ustream and Livestream. YouTube already controlled a large portion of the online video market. By offering live services, it ensures that customers don't need to leave the site to watch live events. Using Google's vast computing and network infrastructure, YouTube can efficiently broadcast live events to millions around the globe.

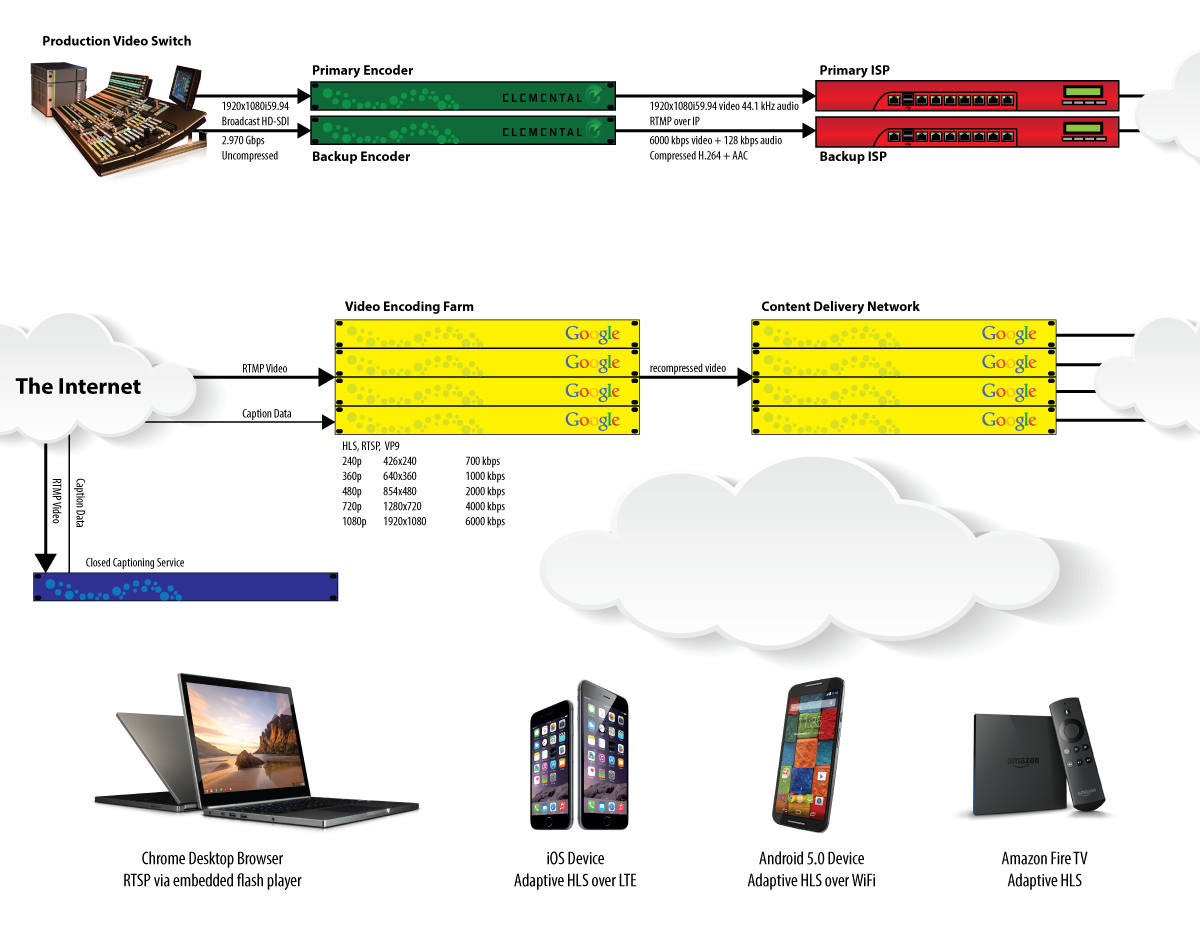

This is an example of a live streaming setup for a large event with high production quality. An HD-SDI signal is fed from the production company to two Elemental hardware encoders. These transcode the video to a high quality H264 stream. The streams are sent to YouTube through different internet providers. In the event of an internet outage or slowdown, YouTube's encoding platform can switch to the secondary feed with minimal interruption.

The H264/HLS encoding process at YouTube adds delay. During that delay, video is streamed to a closed captioning provider. A broadcast captioner sends real-time transcriptions back to Google for inclusion in the video stream.

The encoding farm transcodes the video into about a dozen different formats to meet the needs of customer devices and connectivity. Once transcoded, the video is transferred to the CDN for global distribution.

Live HLS streaming often requires an additional encoding stage to push content to the streaming service. In this example, the Elemental encoders encode the video to push to YouTube, but do not segment the video.

Netflix


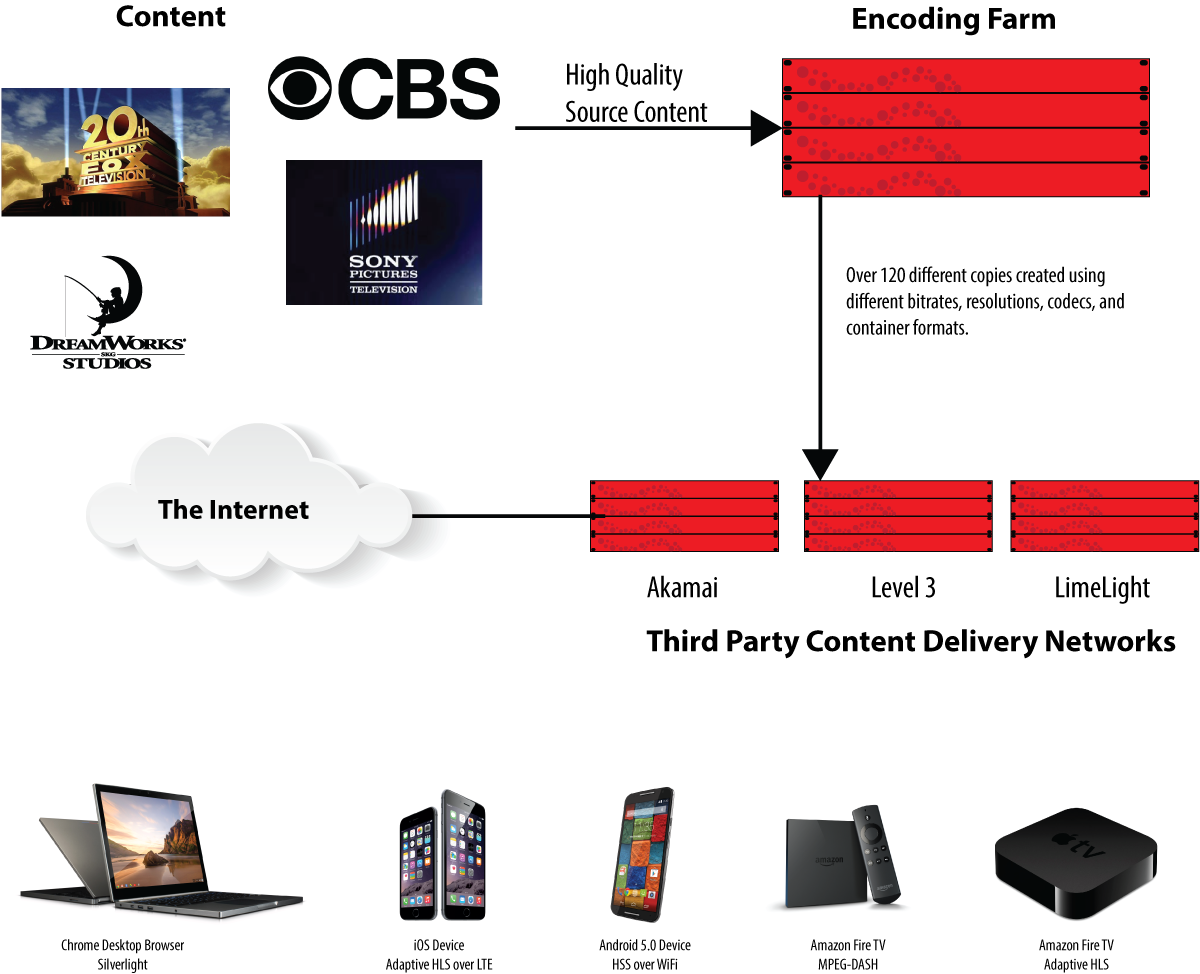

Netflix licenses content from partners such as CBS and Sony. This high quality content is transcoded to over 120 different formats and served to users over third party CDNs. Netflix uses HLS within a Video-On-Demand application. For VOD, the HLS encoding and segmentation processes are often run on the same machine by the transcoding software.

Sources

- App Store Review Guidelines

- About HTTP Live Streaming

- Encoding HLS video for JW Player 6

- Best Practices for Creating and Deploying HTTP Live Streaming Media for the iPhone and iPad

- MPEG-2 Stream Encryption Format for HTTP Live Streaming

- HTTP Live Streaming Overview: Deploying HTTP Live Streaming

- HTTP Live Streaming Overview

- Timeline of Web Browsers

- Netscape Plugin Application Programming Interface

- Web 2.0

- A history of media streaming and the future of connected TV

- Hypertext Transfer Protocol -- HTTP/1.1

- To stream everywhere, Netflix encodes each movie 120 times

- What bitrate is used for each of the youtube video qualities (360p - 1080p), in regards to flowplayer?

- YouTube is going LIVE

- Encoding for streaming

- Netflix uncovered!